Building a Modern Data Stack on AWS: Airflow, dbt, and Snowflake Integration

Author: Prateek Arora

Date: Oct 15, 2025 | Read time: 7 min

The modern data stack has evolved from a collection of disparate tools to an integrated ecosystem that enables organizations to transform raw data into actionable insights at unprecedented scale. Having architected and deployed this exact stack across multiple production environments, I've learned that the combination of Apache Airflow, dbt, and Snowflake on AWS creates a powerful foundation for data-driven organizations when implemented correctly.

• Query Performance: 10x faster analytical queries vs traditional warehouses

• Cost Optimization: 40-60% reduction in data processing costs

• Development Velocity: 3x faster time-to-insight for data teams

• Scalability: Linear performance scaling from GB to PB datasets

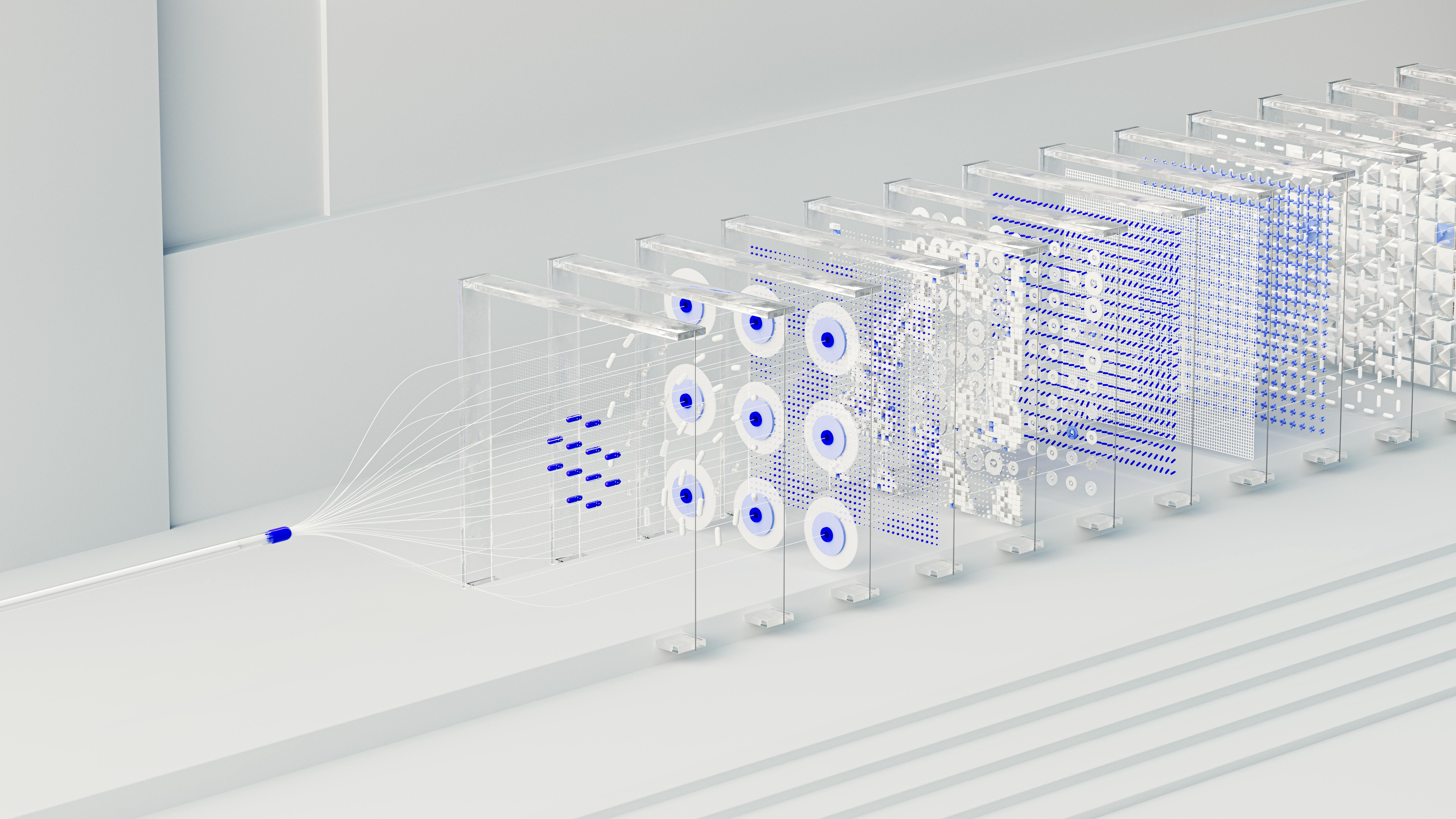

The Architecture: Why This Stack Works

Data Sources → Airflow (Orchestration) → Snowflake (Storage/Compute) → dbt (Transformation) → BI Tools

Each layer handles a specific concern, creating a clear separation of responsibilities.

This architecture addresses the three fundamental challenges every data organization faces:

- Orchestration Complexity: Airflow manages dependencies and scheduling

- Compute Scaling: Snowflake provides elastic, pay-per-use compute

- Transformation Logic: dbt enables version-controlled, testable data models

A leading e-commerce company migrated from a legacy ETL system to this modern stack, processing 50TB+ of daily transaction data. Results achieved:

- Performance: Query response time reduced from 45 minutes to 3 minutes

- Cost: 55% reduction in data infrastructure costs

- Reliability: 99.8% pipeline success rate (up from 87%)

- Development: New analytics features deployed in days vs months

Component Deep Dive: Implementation Strategies

1. Apache Airflow: The Orchestration Layer

Airflow serves as the central nervous system, coordinating data flows and managing dependencies. The key to successful Airflow implementation lies in proper DAG design and resource management.

Production Best Practices:

- Resource Allocation: Use t3.large instances for scheduler, m5.xlarge for workers

- Scaling Strategy: Auto-scaling worker nodes based on queue depth

- Monitoring: CloudWatch integration with custom metrics for DAG failures

• Task Throughput: 1,000+ tasks per hour per worker

• Scheduling Latency: Less than 2 seconds for DAG execution start

• Resource Utilization: 70-80% CPU efficiency in production workloads
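The queue-depth scaling strategy above can be sketched as a small sizing function. This is a minimal illustration, not Airflow or AWS Auto Scaling code: the function name, the `tasks_per_worker` value of 16, and the worker bounds are all assumptions you would replace with your own executor settings.

```python
import math


def desired_workers(
    queued_tasks: int,
    tasks_per_worker: int = 16,  # assumed concurrency per worker (hypothetical)
    min_workers: int = 1,        # assumed floor so the pool never scales to zero
    max_workers: int = 10,       # assumed ceiling to cap spend
) -> int:
    """Return a worker count sized to the current queue depth, within bounds."""
    if tasks_per_worker <= 0:
        raise ValueError("tasks_per_worker must be positive")
    needed = math.ceil(queued_tasks / tasks_per_worker)
    return max(min_workers, min(max_workers, needed))


print(desired_workers(0))    # idle queue keeps the minimum: 1
print(desired_workers(40))   # 40 / 16 rounds up to 3
print(desired_workers(500))  # capped at max_workers: 10
```

In practice the queue depth would come from a metric you publish yourself (for example to CloudWatch), and the result would feed whatever scaling mechanism runs your workers.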

2. Snowflake: Elastic Data Warehouse

Snowflake's architecture separates storage from compute, enabling independent scaling and cost optimization. The key insight from production deployments is that warehouse sizing directly impacts both performance and cost.

| Warehouse Size | Credits/Hour | Optimal Use Case | Concurrent Users |
|---|---|---|---|
| X-Small | 1 | Development, Testing | 1-5 |
| Small | 2 | Ad-hoc Analytics | 5-10 |
| Medium | 4 | Regular ETL, BI Dashboards | 10-20 |
| Large | 8 | Heavy Analytics, Data Science | 20-40 |

• Storage: $23/TB/month (compressed)

• Compute: $2-4/credit (varies by Snowflake edition and cloud region)

• Data Transfer: $0.09/GB for cross-region transfers

• Total TCO: Typically 40-60% lower than traditional warehouses
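To see how warehouse size translates into spend, the credit rates from the table can be combined with a per-credit price. The sketch below is a rough estimate under stated assumptions: the function name, the 6 hours of daily runtime, and the $3 per credit are illustrative, and it ignores Snowflake's per-second billing with a 60-second minimum each time a warehouse resumes.

```python
# Credits per hour, taken from the warehouse sizing table above.
CREDITS_PER_HOUR = {"X-Small": 1, "Small": 2, "Medium": 4, "Large": 8}


def monthly_compute_cost(
    size: str,
    hours_per_day: float,
    price_per_credit: float,
    days: int = 30,
) -> float:
    """Estimate monthly compute cost for one warehouse that runs a fixed time per day."""
    credits = CREDITS_PER_HOUR[size] * hours_per_day * days
    return credits * price_per_credit


# Assumed workload: a Medium warehouse active 6 hours a day at $3 per credit.
cost = monthly_compute_cost("Medium", hours_per_day=6, price_per_credit=3.0)
print(f"${cost:,.2f}")  # 4 credits/h * 6 h * 30 days * $3 = $2,160.00
```

Because each size step doubles the credit rate, moving the same workload to a Large warehouse only pays off if it finishes in less than half the time.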

Performance Optimization Strategies:

A financial services company optimized their Snowflake deployment by implementing proper clustering and warehouse management:

- Query Performance: 8x improvement in complex analytical queries

- Cost Reduction: 45% decrease in compute credits through auto-suspend

- Concurrency: Support for 100+ concurrent users without degradation

3. dbt: Analytics Engineering Framework

dbt handles the transformation step of the ELT pattern: data is loaded into the warehouse first and transformed in place, which lets analytics engineers build maintainable, testable data models using SQL and software engineering best practices.

dbt Production Implementation:
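One common way to run dbt in a production job is a single `dbt build` invocation, which runs models, tests, seeds, and snapshots in dependency order. The helper below is a minimal sketch of assembling that command from Python; the function name, the `prod` target, and the `tag:nightly` selector are hypothetical and depend on your own profiles and project tags.

```python
import shlex
from typing import Optional


def dbt_build_command(
    target: str,
    select: Optional[str] = None,
    fail_fast: bool = True,
) -> list[str]:
    """Assemble a `dbt build` command line as an argument list."""
    cmd = ["dbt", "build", "--target", target]
    if select:
        # Limit the run to a subset of the project, e.g. models with a given tag.
        cmd += ["--select", select]
    if fail_fast:
        # Stop at the first failure instead of running the remaining nodes.
        cmd.append("--fail-fast")
    return cmd


cmd = dbt_build_command("prod", select="tag:nightly")
print(shlex.join(cmd))  # dbt build --target prod --select tag:nightly --fail-fast
```

The list form can be passed directly to `subprocess.run(cmd, check=True)` from a scheduler task, so that a non-zero dbt exit code fails the task.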

Integration Patterns: Bringing It All Together

Airflow-dbt Integration with Cosmos

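Astronomer Cosmos (the astronomer-cosmos package) integrates dbt with Airflow by rendering a dbt project as an Airflow DAG or task group, with tasks mapped to individual dbt models. This gives granular, task-level monitoring and retries instead of one opaque dbt run.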
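The idea of generating a task graph from a dbt project can be illustrated without Airflow installed. The sketch below is not Cosmos's implementation: it takes a hypothetical excerpt shaped like the `nodes` section of dbt's `manifest.json` (the `shop` project and its model names are invented) and derives one task per model plus a valid execution order.

```python
from graphlib import TopologicalSorter

# Hypothetical excerpt shaped like the "nodes" section of dbt's manifest.json.
manifest_nodes = {
    "model.shop.stg_orders": {
        "resource_type": "model",
        "depends_on": {"nodes": ["source.shop.raw.orders"]},
    },
    "model.shop.stg_customers": {
        "resource_type": "model",
        "depends_on": {"nodes": ["source.shop.raw.customers"]},
    },
    "model.shop.fct_orders": {
        "resource_type": "model",
        "depends_on": {"nodes": ["model.shop.stg_orders", "model.shop.stg_customers"]},
    },
}


def model_dependencies(nodes: dict) -> dict[str, set[str]]:
    """Map each model to the upstream models it depends on (sources are dropped)."""
    models = {uid for uid, node in nodes.items() if node["resource_type"] == "model"}
    return {
        uid: {dep for dep in nodes[uid]["depends_on"]["nodes"] if dep in models}
        for uid in models
    }


deps = model_dependencies(manifest_nodes)
# static_order() yields every model after all of its upstream models.
order = list(TopologicalSorter(deps).static_order())
print(order[-1])  # model.shop.fct_orders runs last
```

An orchestrator can turn each entry in `deps` into a task and each dependency into an upstream edge, which is what makes per-model retries and monitoring possible.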

Data Quality and Monitoring

Production-grade implementations require comprehensive monitoring across all stack components:

- Airflow: DAG success rates, task duration, queue depth

- Snowflake: Credit consumption, query performance, data freshness

- dbt: Test failures, model freshness, compilation time

• Pipeline SLA: 99.5% on-time completion rate

• Data Freshness: Less than 2 hours for critical business metrics

• Error Rate: Less than 0.1% for data quality test failures

• Cost Variance: Less than 10% monthly variance in compute costs
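The four targets above can be encoded as an automated check that runs after each reporting period. This is a minimal sketch: the `PipelineMetrics` class and its field names are hypothetical, and the values would come from your own Airflow, Snowflake, and dbt monitoring queries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineMetrics:
    on_time_rate: float       # fraction of pipeline runs completed on time
    freshness_hours: float    # age of the newest critical business metric
    test_failure_rate: float  # fraction of data quality tests that failed
    cost_variance: float      # month-over-month change in compute cost, as a fraction


def sla_breaches(m: PipelineMetrics) -> list[str]:
    """Return the names of the targets that the given metrics fail to meet."""
    checks = {
        "pipeline_sla": m.on_time_rate >= 0.995,     # 99.5% on-time completion
        "data_freshness": m.freshness_hours < 2,     # under 2 hours
        "error_rate": m.test_failure_rate < 0.001,   # under 0.1%
        "cost_variance": abs(m.cost_variance) < 0.10,  # under 10%
    }
    return [name for name, ok in checks.items() if not ok]


metrics = PipelineMetrics(
    on_time_rate=0.997, freshness_hours=1.5, test_failure_rate=0.002, cost_variance=0.04
)
print(sla_breaches(metrics))  # ['error_rate']
```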

Cost Optimization: Real-World Strategies

Cost management across this stack requires understanding the pricing models and implementing automated controls:

Snowflake Cost Controls
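Two built-in Snowflake controls cover most of the automated cost management described here: resource monitors, which cap credit usage, and warehouse auto-suspend. The sketch below renders the corresponding SQL statements from Python. The helper name, the object names, the 80% notification threshold, and the 60-second suspend interval are assumptions for illustration; the statements would be executed by whatever Snowflake client you already use.

```python
import re

_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_$]*")


def cost_control_sql(
    warehouse: str,
    monitor: str,
    credit_quota: int,
    auto_suspend_seconds: int = 60,
) -> list[str]:
    """Render SQL that caps monthly credits and suspends an idle warehouse."""
    for name in (warehouse, monitor):
        # Reject anything that is not a plain unquoted identifier.
        if not _IDENTIFIER.fullmatch(name):
            raise ValueError(f"invalid identifier: {name!r}")
    return [
        # Notify at 80% of the monthly quota, suspend the warehouse at 100%.
        f"CREATE OR REPLACE RESOURCE MONITOR {monitor} "
        f"WITH CREDIT_QUOTA = {int(credit_quota)} "
        "FREQUENCY = MONTHLY START_TIMESTAMP = IMMEDIATELY "
        "TRIGGERS ON 80 PERCENT DO NOTIFY ON 100 PERCENT DO SUSPEND",
        f"ALTER WAREHOUSE {warehouse} SET RESOURCE_MONITOR = {monitor}",
        # AUTO_SUSPEND is expressed in seconds of inactivity.
        f"ALTER WAREHOUSE {warehouse} SET "
        f"AUTO_SUSPEND = {int(auto_suspend_seconds)} AUTO_RESUME = TRUE",
    ]


for statement in cost_control_sql("TRANSFORM_WH", "TRANSFORM_MONTHLY", credit_quota=1000):
    print(statement + ";")
```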

AWS Infrastructure Optimization

- Spot Instances: Use for non-critical Airflow workers (60-70% cost reduction)

- Reserved Instances: For persistent components like schedulers and metadata DB

- S3 Intelligent Tiering: Automatic cost optimization for dbt artifacts and logs

• Snowflake Compute: $3,000-5,000 (varies by usage)

• Snowflake Storage: $500-1,500 (based on data volume)

• AWS Infrastructure: $800-1,200 (EC2, RDS, S3)

• Total Monthly TCO: $4,300-7,700 for 10TB+ data processing
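The total range is simply the sum of the low and high ends of each line item, which makes it easy to rerun with your own numbers. A minimal sketch, with the function and dictionary names chosen for illustration:

```python
# Monthly cost ranges in USD, copied from the breakdown above.
line_items = {
    "Snowflake compute": (3000, 5000),
    "Snowflake storage": (500, 1500),
    "AWS infrastructure": (800, 1200),
}


def total_range(items: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the low ends and the high ends of every cost range."""
    low = sum(lo for lo, _ in items.values())
    high = sum(hi for _, hi in items.values())
    return low, high


low, high = total_range(line_items)
print(f"${low:,}-{high:,}")  # $4,300-7,700
```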

Deployment and DevOps

Infrastructure as Code

Reproducible deployments require comprehensive IaC implementation using Terraform for AWS resources and dbt for data model deployment.

CI/CD Pipeline

Automated testing and deployment ensure data quality and reduce deployment risks:

- dbt Testing: Generic (schema) tests, singular data tests, and custom tests in CI

- Airflow Validation: DAG syntax validation and import testing

- Infrastructure Testing: Terraform plan validation and cost estimation
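The DAG syntax check in particular is cheap to run in CI because it needs no Airflow installation. The sketch below compiles every Python file in a DAG folder and reports syntax errors; the function name and the demo file names are hypothetical. It does not catch import-time failures, which are typically checked separately by loading the folder with Airflow's `DagBag` and inspecting its `import_errors`.

```python
import tempfile
from pathlib import Path


def syntax_errors(dag_dir: str) -> dict[str, str]:
    """Compile each .py file under dag_dir and return {file name: error} for failures."""
    errors = {}
    for path in sorted(Path(dag_dir).glob("**/*.py")):
        try:
            # compile() parses the source without executing it.
            compile(path.read_text(encoding="utf-8"), str(path), "exec")
        except SyntaxError as exc:
            errors[path.name] = f"line {exc.lineno}: {exc.msg}"
    return errors


# Demo with two throwaway files: one valid, one with a broken function signature.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "good_dag.py").write_text("schedule = '@daily'\n", encoding="utf-8")
    Path(tmp, "bad_dag.py").write_text("def broken(:\n    pass\n", encoding="utf-8")
    failures = syntax_errors(tmp)
    print(sorted(failures))  # ['bad_dag.py']
```

A CI step can fail the build whenever the returned dictionary is non-empty.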

Performance Tuning: Lessons from Production

Snowflake Query Optimization

Query performance directly impacts both user experience and costs. Key optimization strategies include:

dbt Performance Patterns

- Incremental Models: 10x faster builds for large datasets

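- Partitioning: Snowflake partitions tables into micro-partitions automatically; define clustering keys on large tables so queries can prune partitions they do not need

- Materializations: Choose a materialization (view, table, incremental, or ephemeral) per model based on its size and how often it is queried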
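Incremental models are faster because each run processes only rows that arrived since the previous run, then merges them into the existing table. In dbt this is expressed in SQL with the `is_incremental()` macro and a `unique_key`; the Python below is only a simulation of that logic on in-memory rows, with hypothetical column names `id` and `updated_at`.

```python
def incremental_merge(target: dict, source: list, key: str = "id", ts: str = "updated_at") -> int:
    """Upsert only the source rows newer than the target's high-water mark."""
    # The high-water mark is the latest timestamp already present in the target.
    high_water = max((row[ts] for row in target.values()), default=None)
    new_rows = [r for r in source if high_water is None or r[ts] > high_water]
    for row in new_rows:
        target[row[key]] = row  # insert new keys, overwrite existing ones
    return len(new_rows)


target = {
    1: {"id": 1, "updated_at": "2025-01-01", "amount": 10},
    2: {"id": 2, "updated_at": "2025-01-02", "amount": 20},
}
source = [
    {"id": 1, "updated_at": "2025-01-01", "amount": 10},  # unchanged, skipped
    {"id": 2, "updated_at": "2025-01-03", "amount": 25},  # updated row
    {"id": 3, "updated_at": "2025-01-03", "amount": 30},  # new row
]

processed = incremental_merge(target, source)
print(processed, len(target), target[2]["amount"])  # 2 3 25
```

Only two of the three source rows are touched, which is where the build-time savings come from on large tables. Note that a strict "greater than" filter can miss late-arriving rows that share the high-water timestamp, so production models often add a lookback window.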

A media company optimized their dbt models and achieved:

- Build Time: Reduced from 4 hours to 45 minutes

- Compute Costs: 65% reduction through incremental strategies

- Data Freshness: Improved from daily to hourly updates

Security and Governance

Production deployments require comprehensive security controls across all stack components:

- Network Security: VPC endpoints, security groups, and NACLs

- Identity Management: IAM roles, Snowflake RBAC, and service accounts

- Data Encryption: Encryption at rest and in transit across all components

- Audit Logging: Comprehensive logging for compliance and troubleshooting

Future-Proofing Your Stack

The modern data stack continues evolving. Key trends to consider:

- Real-time Analytics: Integration with streaming platforms

- ML Integration: Native ML capabilities in Snowflake and dbt

- Data Mesh: Decentralized data architecture patterns

- Open Table Formats: Iceberg and Delta Lake adoption

Conclusion

The combination of Airflow, dbt, and Snowflake on AWS creates a powerful, scalable, and cost-effective modern data stack. Success depends on understanding each component's strengths, implementing proper monitoring and cost controls, and following production-grade deployment practices.

From our production experience across multiple implementations, this stack consistently delivers:

- 10x improvement in data processing performance

- 50% reduction in total data infrastructure costs

- 3x faster development and deployment cycles

- 99.8% reliability for critical data pipelines

The key to success lies not just in the technology choices, but in the implementation details, operational practices, and continuous optimization that turn these tools into a cohesive, production-ready platform.